This is my own recounting of a Haitian folktale, which was given to me in Brooklyn, NY, in 2006, by Mama Lola (Marie Thérèse Alourdes Macena Champagne Lovinski, 1933–2020), a Haitian-born Mambo (priestess) in the African diasporic religious tradition of Haitian Vodou.

The analysis follows the story.

A stall-keeper lived at the edge of a forest in a one-room wattle-and-daub house with his wife and his children. He’d fallen on hard times and was struggling to feed his family.

Even when they had almost no food, his wife would save the last portion of their meager meals for him, insisting she’d already eaten. When he protested, she’d touch his hand and say, “We take care of each other, oui?”

He’d reply, “Oui, cher mwen, we do.”

It was their way.

Tired of scraping by, he decided to go deep into the forest to the foot of the great ancient mapou tree, a sacred dwelling place of spirits, and call on Papa Legba, the trickster guardian of crossroads and communication between worlds.

He approached the mapou tree, lit a bouji (candle), poured three drops of kleren (rum) onto the ground, and sang:

“Legba ouvre baye a…” (Legba open the way…)

Legba, never one to ignore an earnest rele (call), stepped out of the mapou tree.

The stall-keeper asked Legba what he could do to overcome his troubles.

Legba handed the stall-keeper a simple stick and said, “Here… use this stick to overcome your challenges when they arise… you’ll know when to use it. Use it with good character. If you wield it without good character, it will stop working.”

As he was heading home from the mapou tree, he came upon a widow woman whose cart was stuck in the mud. He used the stick to lever her cart out of the mud. She thanked him with enough food to feed his family for a week.

The next morning, he woke before dawn, energized. The magic stick leaned against the doorway, and he grabbed it with a sense of purpose.

On the road to market, dust rising from the donkey’s hooves, the animal suddenly stopped and refused to move. The stall-keeper felt a flash of frustration—his hand tightened on the stick. He could strike the donkey’s flank, get it moving quickly.

He paused. Legba’s words echoed: “…good character.”

He walked to the front of the donkey, used the stick to tap the ground ahead, and coaxed, “Come now, we’ll walk together.” The donkey’s ears perked up and it followed, matching his pace.

They arrived at market as the first merchant stalls were opening. Because he was early—one of the first—he secured a prime spot near the main entrance where every customer would pass. By midday, he’d made more than he usually did in a week.

Walking home, coins jingling in his pocket, he thought:

“Yes, things are going well for me. Mais oui (of course). I’m clever. I have Legba’s stick after all.”

A few days later, he was feeling well-fed, successful, and full of pride. The widow woman had given him food for his family; he’d become fairly well-off from the sales in the market. Of course things were going well for him—he had Legba’s magic stick after all.

One evening, after another successful day at the market, he arrived home.

He demanded, curtly, “Where is my dinner, wife?”

His wife, concerned by his behavior, set down the pot she was stirring.

“Husband,” she said gently, “you used to ask about my day. You used to play with the children before dinner. Now you come home and bark orders like we are servants. This isn’t you.”

She touched his hand the way she always had.

He snatched his hand back. “Don’t touch me. Mwen grangou (I’m hungry).”

He became enraged, his voice rising sharply, “Why are you questioning me? Why are you calling me arrogant? I have Legba’s stick after all.”

She looked at him with deep sadness and said, “It is not the stick I question, husband, but the man it has revealed.”

She persisted in her questioning, which only enraged him further. In his overwhelming anger he hit her with the stick. He needed her to stop talking, to stop looking at him that way. He told her to be quiet and stop questioning his cleverness; he had Legba’s stick after all.

The next morning he awoke to find his wife and children gone. Within a week or two, his money had dried up. The other merchants at the market had begun calling him ‘Frè Arogans’ (Brother Arrogance), and customers avoided his stall. His sales were gone.

He decided to return to Legba to find out why the magic stick had stopped working.

He arrived at the mapou tree to find Legba sitting in his chair, smoking.

Legba looked up at the stall-keeper, took a long draw from his tabac (tobacco), snickered, and in an unsurprised tone said:

“Mais oui…”

The stall-keeper threw the stick down to the ground at Legba’s feet.

“The stick doesn’t work anymore!”

Legba sucked his teeth, snickered again, and picked up the stick. He held it up, paused a moment to get a good look at it, and then unceremoniously tossed it onto a pile of ordinary branches at the base of the mapou tree.

Legba looked back at the stall-keeper and gestured to the pile.

“Clever one… which one is yours?”

The stall-keeper stared. He couldn’t tell. They all looked the same.

“When you helped the widow,” Legba said, “what did the stick do?”

“It… it gave me leverage to free her cart,” the stall-keeper replied.

“And when you led your donkey?” asked Legba.

“I… I used it to lead him forward.”

“And when you struck your wife?” Legba inquired, sucking his teeth.

The stall-keeper’s face went cold. He saw it now—in each moment, he had chosen how to use it.

The widow’s gratitude, the donkey’s cooperation, his wife’s fear… none of it came from the stick.

Legba grinned and snickered. “Mais oui… The stick only works when used with good character and humility. But you see now—it was never the stick. It was always you.” Legba picked up a random branch from the pile and handed it to the stall-keeper.

“This one will work just as well… if you use it right.”

Legba and the Magic Stick: An Allegory for Ethics and AI

This Haitian folktale tells of a struggling stall-keeper who receives what appears to be a magic stick from Papa Legba, the trickster guardian of crossroads. The stick comes with one condition: “Use it with good character. If you wield it without good character, it will stop working.”

The stall-keeper uses it wisely at first—helping a widow, leading his stubborn donkey gently to market. Things go well. Very well. So well that he starts believing his success comes from the stick itself, not from his choices about how to use it.

“I have Legba’s stick after all,” becomes his refrain. His growing arrogance blinds him to what’s actually happening: he’s stopped making good choices. When his wife confronts his behavior, he hits her with the stick.

His wife and children leave him. Within weeks, his money dries up, the other merchants nickname him Frè Arogans (Brother Arrogance), and customers avoid his stall. Confused, he returns to Legba to ask why the magic has stopped working.

Legba takes a long draw from his tobacco, snickers, and delivers the lesson:

“…the stick only works when used with good character and humility. But you see now—it was never the stick. It was always you.”

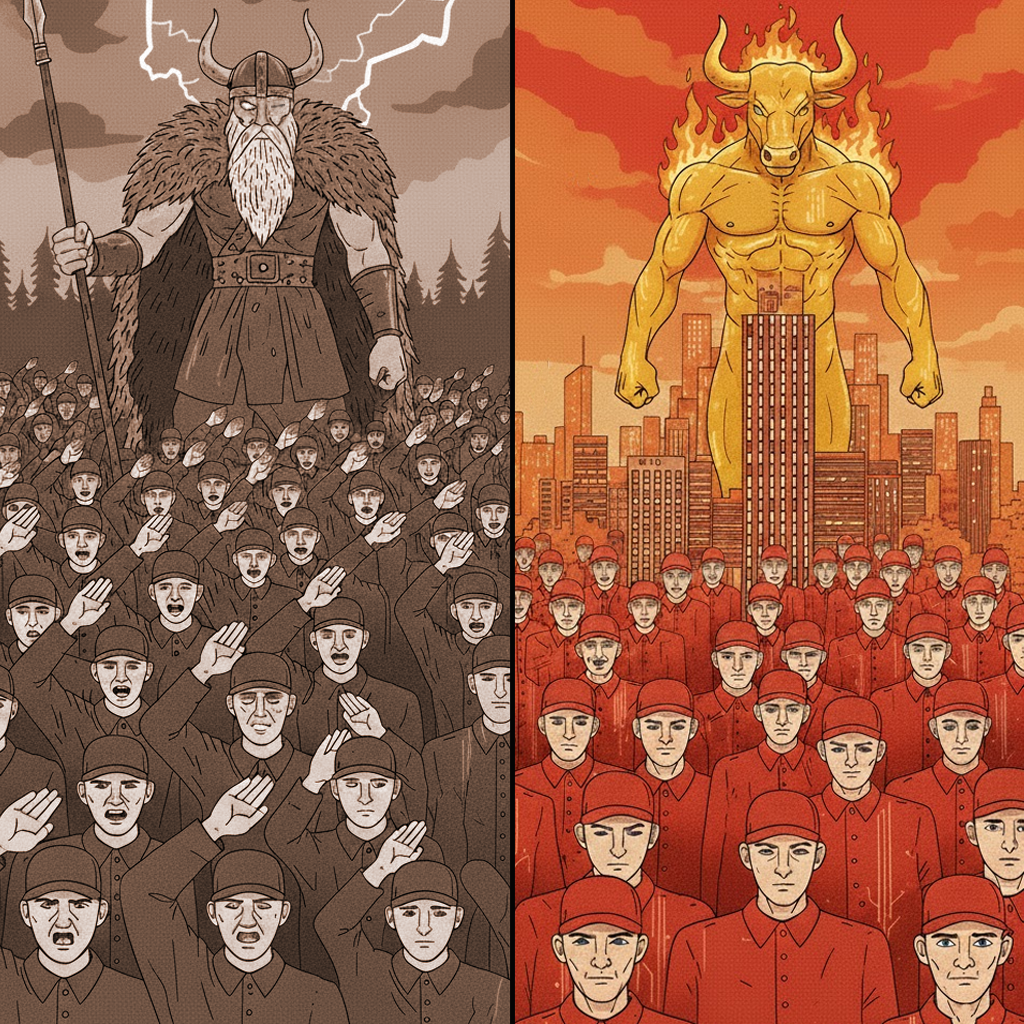

The Stick Is AI

We are the stall-keeper; AI is the stick. And we’re already deep into the story.

Like the stall-keeper, we’ve discovered a tool that seems almost magical in its capabilities. It can write, code, analyze, create, predict. Early adopters are seeing remarkable results—productivity gains, creative breakthroughs, problems solved in hours that once took weeks.

And like the stall-keeper, we’re starting to say: “We have AI after all.”

The Character Problem

The folktale reveals something we keep trying to avoid: AI isn’t a technical problem requiring technical solutions. It’s a character problem requiring moral wisdom.

The stall-keeper made three choices with his stick:

First, he helped the widow. The use case was obviously good—someone needed help, he provided it, everyone benefited. This is AI for accessibility tools, medical diagnostics, climate modeling. Clear wins that reflect good intent.

Second, he faced the lazy donkey. Should he hit it or lead it? This is where most AI decisions actually live—not in obviously evil applications, but in ambiguous choices that seem pragmatic. Should we automate this job or augment the worker? Deploy facial recognition to “increase safety” despite privacy concerns? Use AI to “optimize” hiring despite bias risks? These require moral reasoning, not just technical capability.

Third, he struck his wife with the stick, and she left. He used the tool to silence criticism and maintain power. This is autonomous weapons, surveillance systems that oppress, algorithmic discrimination that enriches some while systematically harming others. The tool hasn’t changed; his character has.

“I Have AI After All”

The most dangerous part of the story isn’t the stick or even the bad choices. It’s the refrain: “I have Legba’s stick after all.”

This is attribution error at scale. The stall-keeper stops examining his own judgment and starts crediting the tool. His self-reflection disappears behind his confidence in the stick’s power.

We’re doing this with AI right now:

- “The algorithm decided” (No—you decided to deploy that algorithm)

- “AI-powered insights” (Insights you chose to act upon)

- “This is what the model recommends” (You selected the model, the training data, the parameters, the context)

Every time we attribute agency to AI, we’re dodging accountability for our choices. The stall-keeper believed the stick was magic. We believe AI is intelligent. Both beliefs serve the same function: they let us avoid examining our own character.

The Prosperity Test

Here’s what’s particularly relevant: the stall-keeper doesn’t fail during hardship. He fails during success.

When he’s struggling, he makes good choices. When things are going well, his character corrupts. Prosperity—not adversity—reveals who he really is.

We’re entering AI prosperity. Capabilities are expanding, companies are booming, competitive pressure is intense. This is precisely when the story warns us we’re most vulnerable.

The temptation isn’t to be cartoonishly evil. It’s to cut corners that seem reasonable:

- Ship before adequate safety testing because competitors are moving fast

- Ignore bias in training data because fixing it is expensive and delays launch

- Deploy in contexts we don’t fully understand because the market opportunity is enormous

- Dismiss concerns as “fear-mongering” because acknowledging them threatens our advantage

“We have AI after all” becomes justification for increasingly questionable choices. And we won’t notice our own corruption—the stall-keeper didn’t. He was genuinely confused when the stick stopped working.

Brother Arrogance

The community gives the stall-keeper a new name: ‘Frè Arogans.’ His reputation precedes him. After striking his wife, he wakes to find his family gone. Customers avoid his stall.

Reputation is the social consequence of character failure. We’re already seeing this:

- Companies known for replacing workers without consideration

- Platforms that harvest user data without meaningful consent

- Systems that perpetuate discrimination while generating profit

- Organizations that silence internal criticism about AI ethics

The social backlash isn’t anti-technology. It’s anti-arrogance. It’s people recognizing when a tool is being wielded without good character, and responding accordingly.

What Stops Working

In the folktale, the magic stops working. This isn’t arbitrary—it’s causality. Tools wielded without character eventually fail because:

- The feedback loops break down. The stall-keeper stopped listening to his wife’s concerns and drove her away. Organizations that silence ethical criticism lose their early-warning systems. By the time problems become obvious, they’re catastrophic.

- Trust evaporates. Once customers started avoiding his stall, his best location couldn’t save him. Once users distrust your AI system—because it’s failed them, harmed them, or revealed its makers’ bad faith—adoption craters regardless of technical capabilities.

- Reality reasserts itself. You can ignore character for a while, but you can’t ignore the consequences of ignoring character. Regulatory backlash, user exodus, societal pushback—these aren’t irrational responses to technology. They’re rational responses to witnessing power wielded badly.

The Return to the Crossroads

The stall-keeper returns to Legba asking a technical question: Why did the magic stop?

He still doesn’t understand. He wants a fix for the tool when the problem is his character. He’s essentially asking “How do I make the stick work again?” when he should be asking “What kind of person have I become?”

This is where we are with AI safety. We keep asking:

- How do we make AI safe?

- How do we align AI with human values?

- How do we prevent AI from causing harm?

These frame the problem as technical. But Legba’s response reframes everything: “Mais oui… The stick only works when used with good character and humility. But you see now—it was never the stick. It was always you.”

The Real Questions

If AI is the stick, and character is what matters, then the questions change:

Not: “What can AI do?”

But: “What should we do with AI?”

Not: “How powerful can we make it?”

But: “What kind of people are we while we wield this power?”

Not: “How do we make AI aligned?”

But: “How do we ensure the humans deploying AI are aligned with wisdom, humility, and good character?”

Not: “What are AI’s capabilities?”

But: “What are our responsibilities?”

Character at Scale

Here’s what the folktale ultimately teaches: powerful tools don’t change character; they reveal and amplify it.

The stick didn’t make the stall-keeper arrogant—it gave his existing capacity for arrogance a force multiplier. The stick didn’t make him violent—it made his violence more damaging.

AI works the same way. It takes human judgment—wise or foolish, careful or reckless, humble or arrogant—and gives it scale, speed, and reach it never had before.

If we have good character, AI amplifies our ability to help, heal, solve, and create.

If we lack good character, AI amplifies our ability to harm, exploit, oppress, and destroy.

The tool is neutral. We are not.

What Good Character Looks Like

The folktale gives us the answer in the first two uses of the stick:

With the widow: The stall-keeper saw someone in need and helped without calculating benefit. Good character asks “Who needs help?” before “What’s in it for me?”

With the donkey: He paused to weigh two options, the easy violent one and the harder patient one. He chose patience. Good character requires deliberation: resisting the expedient choice when it conflicts with the right one.

Applied to AI, good character looks like:

Humility – Admitting what we don’t know, can’t predict, haven’t considered. The stall-keeper’s downfall began when he started thinking he was clever. Organizations that are certain they’ve thought of everything are already corrupted.

Accountability – Never saying “the algorithm decided.” Always being willing to answer: “I chose to deploy this, knowing these risks, because I judged that…”

Moral courage – Being willing to slow down, not ship, walk away from profitable applications that would cause harm. Saying “we can, but we shouldn’t” requires courage that quarterly earnings don’t reward.

Listening to criticism – The wife tried to warn him. He hit her. Organizations that punish whistleblowers, dismiss ethicists as “not understanding the technology,” or frame all criticism as anti-progress are repeating his mistake.

Restraint – Sometimes the right choice is not to use the tool. Not every problem needs an AI solution. Not every capability should be deployed. The stick was powerful, but wisdom sometimes means setting it down.

The Crossroads We’re At

Legba is the guardian of crossroads—places of decision, where paths diverge.

The stall-keeper stood at several crossroads in this story:

- Help the widow or pass by

- Hit the donkey or lead it gently

- Listen to his wife or silence her

We’re at our own crossroads with AI. Multiple paths diverge from here:

One path: We continue attributing agency to AI, dodging accountability, moving fast and breaking things, calling criticism “fear-mongering,” and optimizing for capability and market share above all else. This path leads where the stall-keeper ended up: branded ‘Frè Arogans,’ avoided by customers, alienated from his family, his money dried up, confused about why things went wrong.

Another path: We acknowledge that this is a character test, not a technical challenge. We build institutions that reward moral courage, not just innovation. We slow down when wisdom requires it. We listen to the people trying to warn us. We accept that some applications we can build, we shouldn’t. We remember that the tool isn’t magic—it’s our choices that matter.

The Lesson Legba Teaches

“If you wield it without good character, it will stop working.”

This isn’t a moral platitude. It’s a prediction about consequences. Systems built and deployed without good character will eventually fail—not because of divine intervention, but because reality has feedback loops. Bad character creates bad outcomes, and bad outcomes eventually catch up with you.

The question isn’t whether AI is dangerous. The question is whether we have the character to wield something this powerful wisely.

We keep searching for technical solutions: better alignment, more robust safety measures, clearer guidelines. These matter. But they’re not sufficient. You can’t engineer your way out of a character problem.

The stall-keeper had clear instructions: use it with good character. That was always enough. The stick worked fine when he did. The problem was never the stick.

We Already Have the Answer

The most troubling part of this folktale is that the stall-keeper had to return to Legba to ask why things went wrong. He’d been told the answer at the beginning. He’d even proven he understood it—he helped the widow, he led the donkey gently.

But success made him forget. Prosperity corrupted his judgment. And the tool that amplified his good choices early on amplified his bad choices later.

We don’t need to wait for better AI to solve AI’s problems. We need better humans making better choices about how to use the AI we already have.

The stick was never magic.

AI isn’t actually intelligent.

The power was always ours.

The responsibility was always ours.

The character required was always ours to develop or fail to develop.

Legba just snickers, unsurprised, as we slowly figure out what he told us from the beginning:

It has everything to do with your intent when you use it.